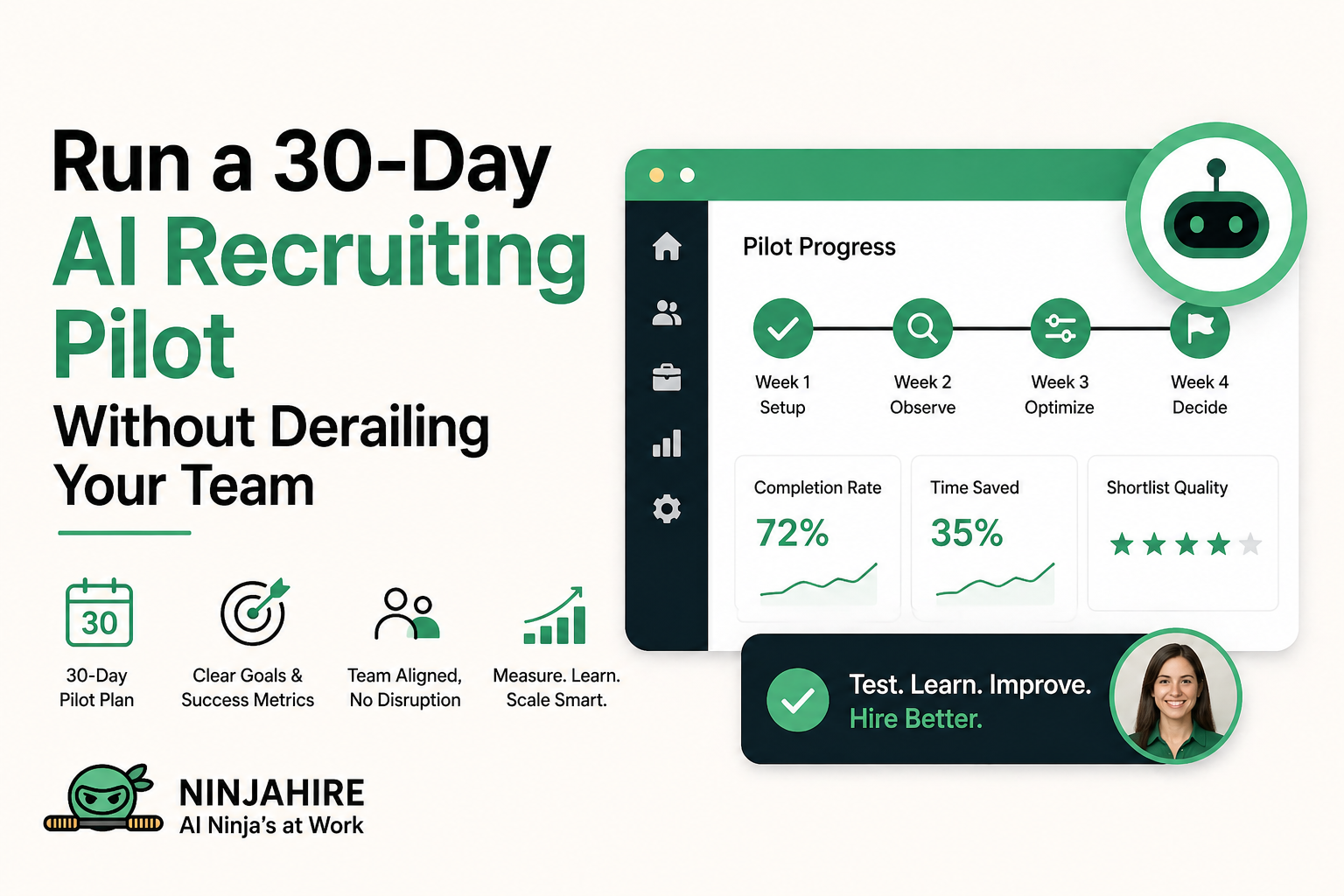

How to run a 30-day AI recruiting pilot without derailing your team

March 15, 2026

What an AI Recruiting Pilot Actually Means

An AI recruiting pilot is a time-bound, structured experiment where a hiring team tests AI-powered screening or workflow automation on a specific role or requisition before rolling it out broadly. It is not a trial license. It is not a demo. It is a controlled test with defined inputs, tracked outputs, and a clear decision at the end. A well-run AI hiring pilot gives you real data on whether AI improves your screening speed, shortlist quality, and recruiter efficiency — without disrupting your entire hiring operation. Most pilots run 30 days and cover a single high-volume or repeatable role.

Why Most AI Recruiting Pilots Fail

Most AI recruiting pilots do not fail because the technology is bad. They fail because of how teams approach the experiment. The three most common failure modes are tool-focused thinking, missing baselines, and poor adoption.

Tool-focused thinking happens when a team treats the pilot like a product demo rather than a workflow redesign. They spend the first two weeks clicking around the platform, exploring features, and never actually running real candidates through the system. By the time they get to actual hiring, the pilot window is gone. The question to ask is not "what can this tool do?" but "how does this tool change what we do, and does that change produce better outcomes?"

The baseline problem is equally damaging. If you do not know how long screening currently takes, how many candidates your recruiters review per role, or what percentage of phone screens convert to hires, you have nothing to compare against. You cannot claim the AI saved time if you never measured how much time you were spending. Baselines are not a nice-to-have. They are the foundation of the entire evaluation.

Poor adoption is the third killer. Even if the AI works well, if your recruiters do not trust it, do not use it consistently, or route around it by reviewing candidates manually anyway, your data is contaminated. Adoption has to be baked into the pilot design, not chased after the fact. That means involving recruiters in setup, explaining what the AI is doing and why, and making it easier to use the system than to skip it.

Choosing the Right Role for Your Pilot

The role you pick for your AI hiring pilot will determine whether you get useful data or noise. There are four things to look for: volume, clarity, risk tolerance, and recruiter readiness.

Volume matters because AI screening tools need a meaningful sample size to show any signal. If you are piloting on a role that gets three applications a week, you will not have enough data in 30 days to draw conclusions. Pick a role with at least 50 to 100 applicants expected over the pilot window. Customer support, SDR, operations coordinator, and entry-level technical roles tend to work well for this reason.
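The volume check is simple arithmetic, and it is worth doing before you commit to a role. The sketch below projects applicant volume over the pilot window from a weekly rate and compares it to the lower bound of 50 mentioned above. The function names and the 30-day default are illustrative assumptions, not part of any platform.

```python
def expected_applicants(per_week: float, pilot_days: int = 30) -> float:
    """Project applicant volume across the pilot window from a weekly rate."""
    return per_week * pilot_days / 7


def has_enough_volume(per_week: float, minimum: int = 50, pilot_days: int = 30) -> bool:
    """True when projected volume meets the minimum sample size (assumed: 50)."""
    return expected_applicants(per_week, pilot_days) >= minimum


if __name__ == "__main__":
    # Three applications a week projects to roughly 13 applicants in 30 days.
    print(round(expected_applicants(3), 1))
    print(has_enough_volume(3))
    print(has_enough_volume(12))
```

By this estimate, a role needs roughly 12 or more applications a week to clear the 50-applicant floor in 30 days.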

Clarity means the role has defined criteria for a good hire. If your hiring manager cannot tell you what a strong candidate looks like in concrete terms — relevant experience, specific skills, measurable past performance — the AI cannot screen for it. Ambiguous roles make for ambiguous pilots. Pin down what good looks like before you configure anything.

Risk is about stakes. Do not run your first AI pilot on a VP-level search or a role that is already three months behind schedule. Start with a role where if the pilot produces imperfect results, the business can absorb it without crisis. This is about learning, not perfection.

Recruiter mindset matters more than people admit. If the recruiter assigned to the pilot role is deeply skeptical of AI, resistant to change, or too busy to engage seriously with the experiment, your results will be skewed. Pick someone who is curious, willing to try something new, and has enough bandwidth to actually track what is happening.

Baseline Metrics You Must Capture Before Starting

Before you turn on a single AI workflow, capture your current state. This is non-negotiable. These numbers become the benchmark against which every AI outcome is judged.

| Metric | Why it matters |
|---|---|
| Time to screen | Measures how long it takes from application to recruiter decision; the core speed metric AI should improve |
| Pass rate from application to phone screen | Tells you what percentage of applicants your recruiters currently advance; a quality baseline for comparison |
| Recruiter time per review | Measures how many minutes a recruiter spends manually reviewing each application; the efficiency benchmark |
| Hiring manager satisfaction with shortlists | A qualitative signal on whether the candidates reaching interview are actually strong fits |
| Candidate drop-off rate | Tracks how many candidates disengage before completing the application or screening; a UX health check |
| Time to first interview | End-to-end pipeline speed from application to first scheduled interview; shows total process efficiency |

Pull this data from your ATS for the last 60 to 90 days on the pilot role. If you cannot find it in your ATS, have the recruiter manually track it for one to two weeks before the pilot begins. Imperfect data is still better than no data.
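If your ATS can export one row per application, two of these baselines fall out of a few lines of code. The sketch below is a minimal example, assuming each row has `applied_at` and `decided_at` timestamps in ISO 8601 format and an `advanced` flag for whether the candidate reached a phone screen. Those field names are hypothetical, so map them to whatever your export actually contains.

```python
from datetime import datetime
from statistics import median


def baseline_metrics(applications):
    """Summarize time to screen and pass rate from exported application rows.

    Each row is a dict with hypothetical keys 'applied_at' and 'decided_at'
    (ISO 8601 strings) and 'advanced' (True if moved to a phone screen).
    """
    if not applications:
        raise ValueError("no applications to summarize")

    hours_to_screen = []
    for row in applications:
        applied = datetime.fromisoformat(row["applied_at"])
        decided = datetime.fromisoformat(row["decided_at"])
        hours_to_screen.append((decided - applied).total_seconds() / 3600)

    advanced = sum(1 for row in applications if row["advanced"])
    return {
        "applications": len(applications),
        "median_hours_to_screen": median(hours_to_screen),
        "pass_rate": advanced / len(applications),
    }


if __name__ == "__main__":
    sample = [
        {"applied_at": "2026-01-05T09:00:00", "decided_at": "2026-01-06T09:00:00", "advanced": True},
        {"applied_at": "2026-01-05T10:00:00", "decided_at": "2026-01-07T10:00:00", "advanced": False},
    ]
    print(baseline_metrics(sample))
```

The median is used here rather than the mean so that a handful of applications that sat untouched for weeks do not distort the baseline. That is a design choice, not a requirement.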

Defining Success Criteria Before Day 1

This is the step most teams skip and the reason most pilots end in ambiguity rather than decision. Before the pilot starts, you need to write down in plain language what success looks like. Not a vague improvement. Specific, measurable outcomes that would make you confident enough to expand or kill the experiment.

A strong success criteria document answers three questions. First, what does the AI need to achieve for this to be considered a win? For example: screening time decreases by at least 40 percent, the recruiter spends less than 30 minutes per day reviewing AI-scored candidates, and the hiring manager approves at least 70 percent of the shortlist without requesting additional candidates. These are not aspirational goals. They are pass-fail lines.

Second, what would cause you to stop the pilot early? Define your kill conditions. If the AI is systematically filtering out strong candidates that your recruiter would have advanced, if candidate completion rates drop below 50 percent, or if hiring manager satisfaction with shortlists deteriorates versus baseline — those are stop signals. Having them written down before you start removes the ambiguity when something goes wrong mid-pilot.

Third, who makes the final call? Name a decision-maker. It should not be a committee. One person reviews the results at day 30 and decides: scale, extend, or stop. This prevents the pilot from drifting into a permanent state of maybe.

Keep the success criteria document to one page. Share it with every stakeholder before the pilot starts. If someone disagrees with the criteria, resolve it now — not at the review meeting when someone tries to move the goalposts.
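One way to keep the criteria from drifting is to write them down as explicit checks. The sketch below encodes the example thresholds from this section (40 percent faster screening, under 30 minutes of recruiter review per day, 70 percent shortlist approval) and two of the kill conditions (completion below 50 percent, hiring manager satisfaction below baseline). The class, field names, and satisfaction scale are assumptions for illustration. The third kill condition, the AI filtering out strong candidates, needs a human review and is not modeled here.

```python
from dataclasses import dataclass


@dataclass
class PilotResults:
    baseline_screen_hours: float
    pilot_screen_hours: float
    recruiter_minutes_per_day: float
    shortlist_approval_rate: float   # share of shortlist approved, 0 to 1
    completion_rate: float           # share of candidates finishing, 0 to 1
    baseline_hm_satisfaction: float  # any consistent scale, e.g. 1 to 5
    pilot_hm_satisfaction: float


def success_checks(r: PilotResults) -> dict:
    """Pass-fail lines using the example thresholds from the text."""
    reduction = 1 - r.pilot_screen_hours / r.baseline_screen_hours
    return {
        "screening_time_down_40pct": reduction >= 0.40,
        "recruiter_under_30_min_per_day": r.recruiter_minutes_per_day < 30,
        "shortlist_approval_at_least_70pct": r.shortlist_approval_rate >= 0.70,
    }


def kill_signals(r: PilotResults) -> dict:
    """Stop signals; any True value should trigger an early review."""
    return {
        "completion_below_50pct": r.completion_rate < 0.50,
        "hm_satisfaction_below_baseline": r.pilot_hm_satisfaction < r.baseline_hm_satisfaction,
    }


if __name__ == "__main__":
    results = PilotResults(40, 22, 25, 0.65, 0.62, 3.8, 4.1)
    print(success_checks(results))
    print(kill_signals(results))
```

The code only reports which lines were crossed. The scale, extend, or stop call still belongs to the named decision-maker.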

Week 1: Setup and Configuration

Week one is entirely about building the right foundation. The decisions you make here — particularly around question design and scoring — will determine whether your pilot produces useful signal or just noise.

Start with question design. Work with the hiring manager to identify three to five criteria that genuinely differentiate strong candidates from weak ones for this specific role. Turn each criterion into a specific, answerable screening question. Avoid generic prompts like "tell me about yourself." Use targeted prompts like "describe a situation where you had to manage competing deadlines with limited resources, and walk me through what you prioritized." Good questions reveal capability, judgment, or relevant experience.

Scoring setup comes next. Define what a strong, acceptable, and weak answer looks like for each question. In most AI screening platforms, this becomes the rubric the system uses to score candidates. Spend time here. Vague rubrics produce vague scores. If you cannot describe what a 9-out-of-10 answer looks like in concrete terms, your scoring will be inconsistent and your shortlist will be unreliable.

ATS integration should be configured before you launch. Make sure the AI platform connects properly to your applicant tracking system, that candidate statuses sync automatically, and that recruiter notifications work as expected. There is nothing more damaging to adoption than a clunky handoff between systems that makes extra work for the recruiter.

Before going live, run internal test candidates through the full flow. Have two or three people complete the screening as if they were real applicants. Review their scored responses. Check whether the scoring aligns with how you would manually evaluate those answers. Adjust if needed. Launch day is not the time to discover that your scoring rubric rewards verbose answers over relevant ones.

Week 2: Observation Phase

Week two has one rule: observe, do not adjust. You need clean, uninterrupted data from your initial setup to understand what is actually happening in the pipeline. If you start tweaking scoring thresholds or rewriting questions before you have seen a full week of real applicant responses, you corrupt your baseline and cannot isolate what is driving any changes you see.

What you should be doing instead is tracking patterns. Review every scored candidate. Note the cases where the AI score and your recruiter's instinct agree. Note the cases where they diverge. Look at completion rates — are candidates finishing the screening or dropping off at specific questions? Look at score distribution — are most candidates clustering in the middle range, which usually signals that your questions are not discriminating enough, or are you seeing a healthy spread across the range?
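The score distribution check can be made concrete with two numbers: the share of candidates landing in the middle band and the overall spread. The sketch below assumes scores on a 0 to 10 scale and treats 4 to 6 as the middle range. Both the scale and the band are assumptions to adjust for your platform.

```python
from statistics import pstdev


def score_spread(scores, low=4.0, high=6.0):
    """Report how tightly scores cluster in the middle band [low, high]."""
    if not scores:
        raise ValueError("no scores to summarize")
    in_middle = sum(1 for s in scores if low <= s <= high)
    return {
        "middle_share": in_middle / len(scores),
        "std_dev": pstdev(scores),
    }


if __name__ == "__main__":
    clustered = [5, 5, 6, 4, 5, 5, 6, 5]
    spread_out = [2, 9, 5, 7, 3, 8, 1, 6]
    print(score_spread(clustered))
    print(score_spread(spread_out))
```

A high middle share combined with a low standard deviation is the pattern to watch for, since it suggests the questions are not separating strong candidates from weak ones.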

Recruiter feedback is gold during this phase. After your recruiter reviews the AI-scored shortlist each day, ask two questions: were there candidates the AI ranked highly that you would not have advanced, and were there candidates the AI ranked low that you think deserved a closer look? Document these cases. They will become the calibration input for week three.

Also watch for candidate experience signals. If you are using async video or written response screening, are candidates completing it in a reasonable timeframe? Are you getting any direct feedback — positive or negative — through your ATS or candidate communication channels? A great screening process should feel fair and straightforward to candidates, not like an obstacle course.

Week 3: Calibration and Adjustment

Week three is where you take everything you learned in the observation phase and make targeted, documented adjustments. The key word here is targeted. You are not rebuilding the pilot from scratch. You are making specific changes based on specific evidence.

Start with scoring refinement. If week two showed that a particular question is not producing meaningful differentiation — everyone scores similarly regardless of their actual qualifications — either sharpen the rubric for that question or replace it entirely. If the scoring threshold for advancing candidates is either too tight or too loose relative to hiring manager expectations, adjust it now with a documented rationale.

Address any candidate flow issues you identified. If there is a point in the screening where completion rates drop significantly, investigate why. Is the question unclear? Is the format unfamiliar? Is the time estimate wrong? Make the friction point easier to navigate. Every candidate who drops off before completing the screen is data you lost.
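To find where candidates drop off, it helps to turn completion data into a per-question funnel. The sketch below assumes you can get, for each candidate, the number of screening questions they answered before stopping. That input shape and the function names are hypothetical, since dashboards vary in what they export.

```python
def completion_funnel(answered_counts, num_questions):
    """Share of candidates who answered at least question q, for q = 1..num_questions."""
    if not answered_counts:
        raise ValueError("no candidates to summarize")
    total = len(answered_counts)
    return [
        sum(1 for n in answered_counts if n >= q) / total
        for q in range(1, num_questions + 1)
    ]


def biggest_drop(funnel):
    """Return (question_number, drop) for the largest fall from the previous step."""
    previous = 1.0  # everyone who started counts as reaching question 1
    worst_question, worst_drop = 1, 0.0
    for question, rate in enumerate(funnel, start=1):
        drop = previous - rate
        if drop > worst_drop:
            worst_question, worst_drop = question, drop
        previous = rate
    return worst_question, worst_drop


if __name__ == "__main__":
    # Ten candidates, five questions; values are questions answered per candidate.
    answered = [5, 5, 2, 5, 2, 3, 5, 0, 2, 5]
    funnel = completion_funnel(answered, num_questions=5)
    print(funnel)
    print(biggest_drop(funnel))
```

In this made-up sample the sharpest fall is at question three, which is the question to reread for clarity, format, and time estimate.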

Loop back in the hiring manager. Share a representative sample of AI-scored candidates and their responses. Ask them to rate the quality of the candidates they see. Use that feedback to validate whether your scoring rubric is actually identifying the profiles they want. This conversation often surfaces assumptions that were never made explicit during setup — and surfacing them in week three rather than week four gives you time to fix them.

Document every change you make in week three, including the before state, the change, and the reason. This record becomes essential when you are presenting results and explaining what you learned. It also makes it much easier to replicate the setup if you decide to scale.

Week 4: Results and Decision

Week four is not an extension of the experiment. It is the evaluation phase. By day 28, you should be compiling results against your success criteria. By day 30, the decision-maker you named before the pilot started should be making the call.

There are essentially three outcomes. The first is scale: the pilot hit or exceeded your success criteria, adoption is solid, hiring managers are happy with shortlist quality, and recruiters are spending meaningfully less time on manual review. This is the green light to expand the AI workflow to additional roles. Define which roles, set a timeline, and assign an owner.

The second is extend: the pilot showed genuine promise but hit a specific limitation — maybe one of your questions needs a full redesign, or ATS integration had reliability issues that need to be resolved, or you ran into an unusually slow applicant week that skewed your volume data. In this case, a two-week extension is reasonable. But only extend if you have a concrete fix in progress. Extending a pilot because you are not sure what to conclude is not an extension — it is avoidance.

The third is stop: the AI consistently produced shortlists that hiring managers rejected, recruiter effort did not decrease in a meaningful way, or candidate experience metrics degraded. This is a valid outcome. It means either this tool is not the right fit for your workflow, or the way you configured the pilot needs a fundamental rethink before you try again. Document the reasons clearly and do not let a failed pilot become a sunk cost that nobody is willing to walk away from.

Metrics to Track Throughout the Pilot

Consistent tracking across all four weeks is what separates a real pilot from a casual experiment. Here are the metrics that matter most, where to get them, and what they tell you.

| Metric | Source | Impact |
|---|---|---|
| Completion rate | AI platform dashboard | Shows candidate engagement and screening friction |
| Shortlist quality rating | Hiring manager weekly feedback | Directly measures hiring success and scoring accuracy |
| Time saved per recruiter per week | Recruiter self-tracking vs baseline | Core efficiency metric; the most visible ROI signal |
| Score-to-advance alignment rate | Comparison of AI scores to recruiter decisions | Measures how well the AI scoring aligns with human judgment |
| Time to first interview | ATS timestamps | End-to-end pipeline speed improvement |
| Candidate satisfaction or experience signal | Candidate survey or drop-off data | Flags whether the AI process helps or hurts employer brand |

Log these weekly, not monthly. Patterns that emerge early — like a consistent drop-off at question three, or hiring managers consistently rejecting the top-scored candidates — need to be visible quickly enough to act on in week three. Weekly tracking is what gives you that window.
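The score-to-advance alignment rate in the table above is the least standardized of these metrics, so here is one possible way to compute it. The sketch treats a candidate as "AI advance" when the score meets a threshold, then compares that to the recruiter's actual decision. The tuple layout and the threshold of 7.0 are assumptions. It also lists the two kinds of disagreement worth reviewing by hand.

```python
def alignment_report(candidates, threshold):
    """Compare AI score decisions with recruiter decisions.

    candidates: iterable of (candidate_id, ai_score, recruiter_advanced).
    """
    candidates = list(candidates)
    if not candidates:
        raise ValueError("no candidates to compare")

    agreements = 0
    ai_high_recruiter_rejected = []
    ai_low_recruiter_advanced = []
    for candidate_id, ai_score, recruiter_advanced in candidates:
        ai_would_advance = ai_score >= threshold
        if ai_would_advance == recruiter_advanced:
            agreements += 1
        elif ai_would_advance:
            ai_high_recruiter_rejected.append(candidate_id)
        else:
            ai_low_recruiter_advanced.append(candidate_id)

    return {
        "alignment_rate": agreements / len(candidates),
        "ai_high_recruiter_rejected": ai_high_recruiter_rejected,
        "ai_low_recruiter_advanced": ai_low_recruiter_advanced,
    }


if __name__ == "__main__":
    week = [
        ("c1", 8.5, True),
        ("c2", 7.2, False),
        ("c3", 3.0, False),
        ("c4", 5.5, True),
        ("c5", 9.1, True),
    ]
    print(alignment_report(week, threshold=7.0))
```

The rate alone hides which side was wrong, so the two disagreement lists matter as much as the headline number.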

Common Mistakes During AI Recruiting Pilots

Even teams that do most things right tend to fall into a few consistent traps. Knowing them in advance is the best way to avoid them.

Over-adjusting too early is probably the most common mistake. A recruiter sees a few AI scores that feel off in the first days of live screening and immediately starts tweaking the rubric. The problem is that one or two edge cases do not represent a pattern. If you change your scoring criteria every time you see a result you disagree with, you never develop a stable enough configuration to evaluate. Hold your changes until week three, and make them based on documented patterns, not individual outliers.

Ignoring recruiter feedback goes the other way. Some teams get so focused on tracking quantitative metrics that they forget the recruiter using the system every day is generating the most valuable qualitative signal available. If your recruiter is consistently saying the AI is surfacing candidates that feel wrong for the culture or the role, that is a data point. Capture it and investigate it — do not dismiss it because it does not fit neatly into a spreadsheet.

Unclear ownership derails more pilots than any technical issue. If nobody knows who is responsible for reviewing the weekly metrics, who owns the configuration, or who has the authority to make adjustments, things drift. Assign a pilot owner on day one. That person does not have to do everything, but they are accountable for keeping the experiment on track and making sure the decision gets made at day 30.

No documentation is the mistake you feel most painfully at the end. If you did not write down your initial configuration, the changes you made in week three, the reasons for those changes, and the week-by-week metric movement, your results meeting turns into a debate about what actually happened. Documentation is what allows you to learn from the pilot, replicate the successes, and avoid repeating the failures on the next role.

Building Internal Buy-In Before and During the Pilot

Technology adoption in recruiting almost always lives or dies on trust — specifically, whether the people who use it every day believe it is actually making their jobs better. You cannot mandate buy-in. You have to build it, and the window for building it is mostly before the pilot starts.

Start by involving the recruiter in the setup process, not just as a user, but as a co-designer. Let them weigh in on the screening questions. Ask them what they would want to see in a good AI-scored response. When people have input into how something is built, they are far more likely to give it a fair chance when it goes live.

Be transparent with the recruiter about what the AI is and is not doing. The biggest source of recruiter skepticism about AI screening is the fear that it is a black box that will override their judgment. Make it clear that the AI is a filter and a prioritization tool — it is surfacing candidates for them to review faster, not replacing their evaluation. When recruiters understand that the AI is working for them rather than around them, adoption tends to follow naturally.

Stakeholder alignment is a different challenge. Hiring managers want assurance that the candidates reaching them will be worth their time. HR leadership wants to know there is a governance process and that the AI is being used responsibly. Executives want to see ROI potential. Each of these audiences needs a slightly different message. Before the pilot launches, have a brief conversation with each stakeholder group — not a formal presentation, just a clear explanation of what you are testing, why, and what you will do with the results.

Communicate during the pilot, not just at the end. A quick weekly update to stakeholders — two to three sentences on how the pilot is tracking — keeps everyone informed and builds confidence that someone is paying attention. It also means that when you walk into the day-30 results meeting, nobody is hearing the outcomes for the first time.

Most AI recruiting pilots fail because teams test the tool instead of redesigning the workflow. The technology is usually not the problem. The process design, the success criteria, and the adoption plan are where most pilots come apart — and all three are entirely within your control before you even turn the system on.

Key Takeaway

A well-run AI recruiting pilot is fundamentally an act of organizational learning — not just a performance test. Whether the AI delivers a dramatic efficiency improvement or falls short of expectations, the 30-day pilot process forces your team to clarify what good hiring looks like, document how you currently work, and think seriously about what you would actually need to change to get better results. The teams that get the most out of AI recruiting are rarely the ones with the most sophisticated technology. They are the ones who ran a disciplined pilot, documented what they learned, and used that knowledge to make a clear, confident decision about how to move forward. Treat the pilot as the beginning of a smarter hiring process — not just as an evaluation of a tool.
