Candidate experience benchmark: AI vs human screening across industries


March 15, 2026

Recruiting Operations • Benchmark Research • AI Hiring

The headline assumption is that human recruiters make candidates feel valued and AI screening makes them feel processed. The data is more complicated than that, and understanding why matters enormously if you are responsible for hiring at scale.

Most recruiting leaders have a version of the same intuition: people want to talk to people. When a candidate sits down for a conversation with a skilled recruiter who listens carefully, asks perceptive follow-up questions, and treats the conversation like a genuine exchange rather than a checklist audit, the experience tends to be positive. That part is true. What is also true is that a rushed recruiter on their twentieth screening call of the day, working from a template they stopped reading months ago, delivers a candidate experience that is measurably worse than a well-designed automated workflow.

This is the tension at the center of any honest benchmark comparing AI and human screening: quality of experience is not determined by the type of interviewer, but by the quality of design behind the process. The medium matters less than the structure, the communication, and the care taken at each decision point. That said, medium does matter in some contexts, with some candidate profiles, and in some industries more than others. Understanding those distinctions is what recruiting teams actually need.

Why Candidate Experience Benchmarking Deserves More Attention

Candidate experience has been treated as a soft metric for too long. It gets measured infrequently, reported aspirationally, and rarely connected back to hard recruiting outcomes. That is starting to change, partly because of scale pressures and partly because the cost of poor candidate experience has become harder to ignore.

In high-volume environments like BPO hiring in India or logistics recruiting across the UK and US, companies are processing tens of thousands of applications per quarter. If a candidate exits your process feeling frustrated or disrespected, they may not come back for a future role. They may tell others in their network. In consumer-facing businesses, they may reduce their own engagement with the brand. The downstream effects are real, even if they are hard to isolate in a spreadsheet.

Candidate Net Promoter Score has emerged as a practical shorthand for experience quality. Borrowed from customer experience, candidate NPS asks a simple question: on a scale of zero to ten, how likely are you to recommend this application process to a friend or colleague? Scores above fifty are considered strong in most recruiting benchmarks. Scores below twenty signal structural problems worth addressing. What makes candidate NPS useful in an AI versus human context is that it captures overall perception, not just satisfaction with the outcome. A candidate who was rejected but treated well can still give a high rating. A candidate who advanced but found the process confusing, impersonal, or technically broken may give a low one.
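To make the thresholds above concrete, here is a minimal sketch of how an aggregate NPS is conventionally derived from individual zero-to-ten ratings. It assumes the standard NPS bands (nine or ten counts as a promoter, zero through six as a detractor, seven or eight as passive); the function name `candidate_nps` is illustrative and not taken from any particular survey platform.

```python
from typing import Iterable


def candidate_nps(ratings: Iterable[int]) -> float:
    """Return NPS on the -100..100 scale from individual 0-10 ratings.

    Assumes the conventional bands: 9-10 promoter, 7-8 passive, 0-6 detractor.
    """
    ratings = list(ratings)
    if not ratings:
        raise ValueError("at least one survey response is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")

    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    # NPS is the percentage of promoters minus the percentage of detractors.
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical responses: three promoters, one passive, one detractor.
print(candidate_nps([10, 10, 9, 8, 2]))  # 40.0
```

Because the score is a difference of percentages, it can be negative, and a pool dominated by passive ratings of seven or eight lands near zero even though no individual candidate was unhappy.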

How Candidate Experience Data Is Collected and What It Actually Measures

The most reliable candidate experience data comes from post-screen surveys delivered within 24 to 48 hours of the screening interaction. Response rates drop off significantly after 72 hours, particularly in mobile-first candidate pools where the application experience is already fragmented across devices and notification streams.

Beyond NPS, the most operationally useful data points include perceived fairness of the questions, clarity of instructions, technical reliability of the platform, confidence that the process reflected their actual capabilities, and whether they received meaningful communication about next steps. When these dimensions are tracked separately, it becomes possible to diagnose exactly where a process is underperforming, rather than simply knowing that candidates are dissatisfied.
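As a rough illustration of tracking these dimensions separately, the sketch below averages each dimension across survey responses and reports the weakest one. The dimension keys and the one-to-five rating scale are assumptions made for the example, not a format prescribed by any survey tool.

```python
from statistics import mean

# Hypothetical keys for the five dimensions named above (1-5 scale assumed).
DIMENSIONS = (
    "fairness",        # perceived fairness of the questions
    "clarity",         # clarity of instructions
    "reliability",     # technical reliability of the platform
    "capability_fit",  # confidence the process reflected actual capabilities
    "next_steps",      # meaningful communication about next steps
)


def dimension_means(responses):
    """Average each dimension over the responses that answered it."""
    result = {}
    for dim in DIMENSIONS:
        values = [r[dim] for r in responses if dim in r]
        if values:  # skip dimensions nobody answered
            result[dim] = mean(values)
    return result


def weakest_dimension(responses):
    """Return the dimension with the lowest average rating."""
    means = dimension_means(responses)
    if not means:
        raise ValueError("no dimension ratings found")
    return min(means, key=means.get)


sample = [
    {"fairness": 4, "clarity": 5, "reliability": 2, "capability_fit": 4, "next_steps": 3},
    {"fairness": 5, "clarity": 4, "reliability": 1, "capability_fit": 3, "next_steps": 3},
]
print(weakest_dimension(sample))  # reliability
```

In this made-up sample the overall picture looks acceptable, but the per-dimension view points directly at platform reliability as the place to intervene.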

One important methodological note: candidate experience data is subject to significant outcome bias. Candidates who advanced to the next stage rate their experience markedly higher than candidates who were screened out, even when they went through the exact same process. This is not unique to AI screening. It is a feature of how humans process fairness and feedback. Responsible benchmark analysis controls for this by separating outcome cohorts before drawing any conclusions about process quality.
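The cohort separation described above can be sketched as follows: group responses by outcome first, then compare screening modalities only within the same outcome group. The record layout `(modality, outcome, rating)` and the sample values are hypothetical, and the NPS bands are the conventional ones (nine or ten promoter, zero through six detractor).

```python
from collections import defaultdict


def nps(ratings):
    """NPS on the -100..100 scale (9-10 promoter, 0-6 detractor)."""
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


def nps_by_cohort(responses):
    """Compute NPS per (outcome, modality) cell.

    responses: iterable of (modality, outcome, rating) tuples.
    Comparing modalities within one outcome keeps outcome bias from
    masquerading as a difference in process quality.
    """
    cells = defaultdict(list)
    for modality, outcome, rating in responses:
        cells[(outcome, modality)].append(rating)
    return {cell: nps(ratings) for cell, ratings in cells.items()}


# Hypothetical survey records for illustration only.
sample = [
    ("ai", "advanced", 10), ("ai", "advanced", 9),
    ("ai", "rejected", 6), ("ai", "rejected", 9),
    ("human", "advanced", 10), ("human", "advanced", 7),
    ("human", "rejected", 3), ("human", "rejected", 8),
]
for cell, score in sorted(nps_by_cohort(sample).items()):
    print(cell, score)
```

A pooled comparison of AI against human screening would mix these cells together, so a modality that simply advances more candidates would look better than it is.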

Post-screen surveys delivered within 24 hours yield 3 to 4 times higher response rates than those sent 72+ hours after the interaction. For accurate candidate experience data, timing of the survey is nearly as important as the questions themselves.

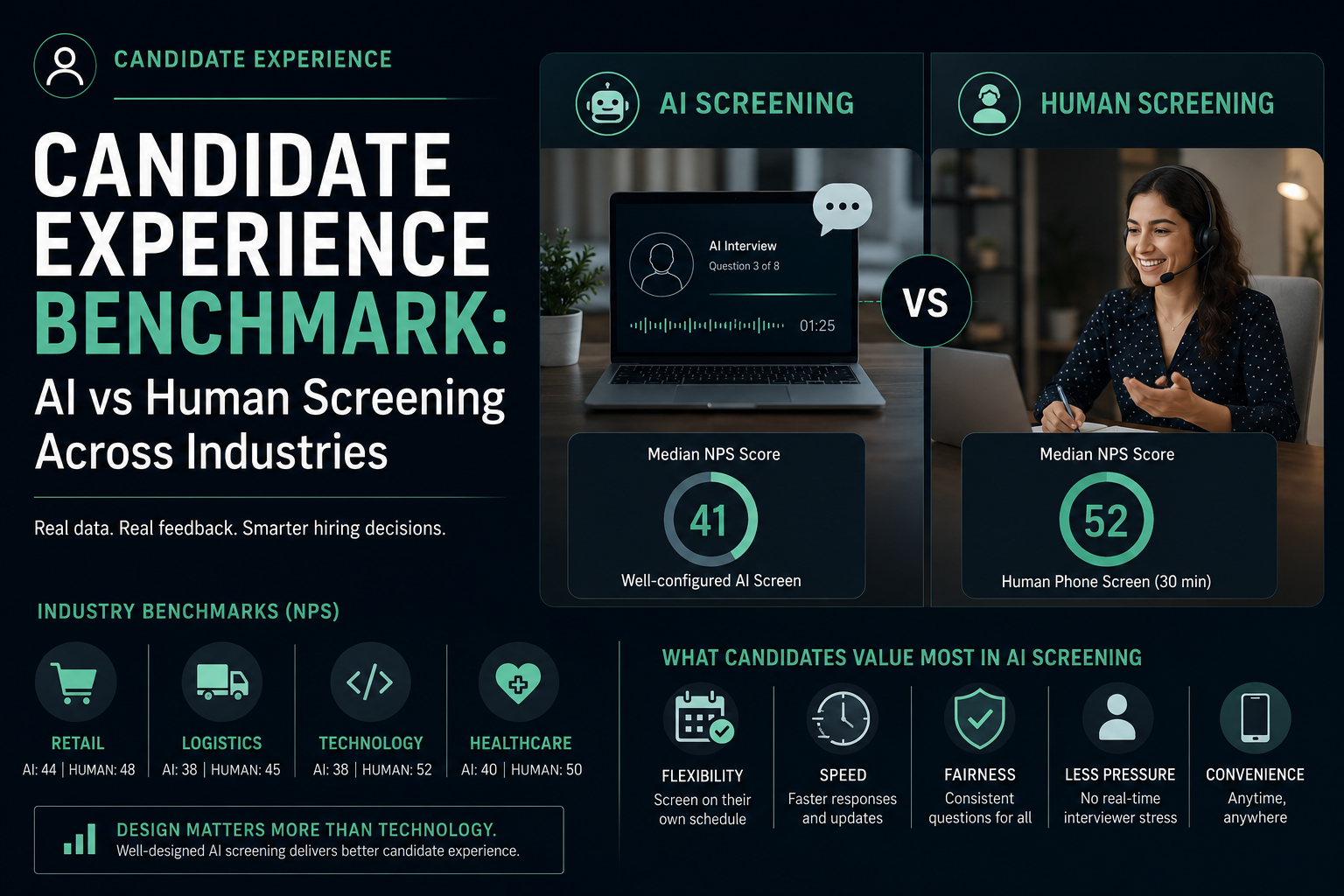

Overall Benchmark Findings: AI vs Human Screening

Across industries and candidate profiles, the aggregate pattern in candidate experience data is more nuanced than either AI advocates or skeptics typically acknowledge. Averaged across all contexts, human recruiter screening tends to score higher on warmth, perceived personal investment, and satisfaction with the conversation itself. AI screening tends to score higher on scheduling convenience, perceived question fairness, speed of process, and consistency of experience across demographic groups.

The important caveat is variance. Both modalities show wide distributions in candidate experience scores, and the overlap between well-designed AI screening and well-designed human screening is substantial. Poorly designed AI screening consistently scores lower than any other category. Poorly designed human screening, however, is not far behind. Candidates who encounter a recruiter who seems distracted, asks questions irrelevant to the role, or fails to explain the process at all are nearly as dissatisfied as candidates who encounter a glitchy AI interview platform with generic prompts.

This finding should reframe how recruiting teams think about the investment question. The decision to deploy AI screening is not really about AI versus human. It is about whether the AI screening process has been designed with the same rigor that a well-designed human screening process demands.

Process Design Is the Variable That Actually Matters

There is a temptation to treat the AI versus human question as primarily a technology decision. It is not. It is primarily a process design decision. The technology is a delivery mechanism. What gets delivered, how it is communicated, what happens before and after the screening interaction, and how candidates are treated when things go wrong: those are the design decisions that drive experience quality.

Consider two AI screening deployments in the same industry. In the first, candidates receive an automated invitation email with minimal context about what to expect, encounter questions that feel copied from a generic competency library, and receive a rejection email three weeks later with no explanation. In the second, candidates receive a detailed, human-sounding explanation of the process, encounter questions clearly tailored to the role, receive an instant acknowledgment after completing the screen, and get a status update within five business days regardless of outcome. The technology is the same. The candidate experience is categorically different.

This is not a theoretical distinction. Recruiting teams that invest in communication design around their AI screening, including pre-screen briefings, transparent AI disclosures, and structured follow-up communication, consistently outperform teams that treat the AI platform as a self-contained hiring tool requiring no additional process design.

Retail and BPO Hiring: Where AI Screening Performs Strongly

High-volume retail and BPO environments represent the clearest case for AI screening from a candidate experience standpoint. In India's BPO sector, which processes millions of entry-level applications annually, AI screening has demonstrated measurable improvements in candidate satisfaction compared to historical human screening baselines, primarily because the human screening baseline was so poor to begin with.

Recruiter-to-applicant ratios in large BPO hiring operations often run at 1:300 or higher during peak intake cycles. At that ratio, the idea of a meaningful human conversation is a fiction. Under the earlier human-led process, candidates waited days for callback confirmations. Screening calls were often rescheduled multiple times. The experience was not warm or personal; it was simply slow and opaque. AI screening, in this context, delivered immediacy that candidates valued. They could complete a screen at 9pm from their phone. They received confirmation within seconds. The process moved forward without the administrative delays that had previously defined the experience.

Similarly, in retail hiring across the UK and US, where frontline roles turn over frequently and candidate pools are broad, AI screening has consistently scored well on fairness and neutrality. Candidates from non-traditional educational backgrounds who might have faced unconscious bias in a human phone screen report feeling that AI screening gave their answers a fair evaluation. Whether that perception fully reflects reality is a more complicated empirical question, but from a candidate experience standpoint, the perception of fairness matters as much as fairness itself.

Where Retail AI Screening Breaks Down

Retail AI screening does encounter meaningful friction around multilingual and low-literacy candidate segments. In geographies with diverse linguistic profiles, such as parts of India where candidates may switch between regional languages and English mid-conversation, early AI screening platforms struggled to parse mixed-language responses accurately. Candidates who received poor feedback or felt their answers were misunderstood reported significantly lower experience scores.

More sophisticated multilingual screening systems that handle code-switching have partially addressed this, but the gap persists in platforms that are English-only or that default to penalizing non-standard speech patterns. For recruiting teams operating across linguistically diverse markets, this remains an area where process design needs to account for candidate reality rather than assuming a uniform communication profile.

Logistics and Warehousing: Speed Above Everything

In logistics and warehousing recruitment, candidate experience research reveals a striking simplicity of preference. More than any other sector studied, logistics candidates prioritize speed and clarity above warmth or perceived personalization. A candidate applying for a warehouse operative role in a fulfillment center is typically also applying to two or three competing employers simultaneously. The employer who reaches them first with a clear next step has a structural advantage.

AI screening in this context functions primarily as a speed mechanism. When configured correctly, it collapses the gap between application and qualification decision from days to hours. Candidate NPS in logistics AI screening tends to track very closely with time-to-decision. Faster decision, higher score. This relationship is stronger than in almost any other sector, which reflects the labor market dynamics of the sector: candidates are often making near-simultaneous decisions, and the process that respects their time most effectively wins.
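One way a team might check whether this relationship holds in its own data is a Pearson correlation between time-to-decision and the rating each candidate gave. This is a minimal sketch with made-up numbers; the variable names are illustrative, and correlation alone does not establish that speed causes the higher ratings.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


# Hypothetical data: hours from application to decision, and 0-10 ratings.
hours_to_decision = [2, 6, 24, 48, 96]
ratings = [10, 9, 8, 6, 3]

r = pearson(hours_to_decision, ratings)
print(f"correlation: {r:.2f}")  # negative: slower decisions, lower ratings
```

A strongly negative coefficient is what "faster decision, higher score" looks like in the data. Running the same check within each outcome cohort avoids confusing it with the tendency of advanced candidates to rate more generously.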

Human screening in logistics, by contrast, frequently loses candidates to drop-off before the first call is even scheduled. Callback lag of more than 48 hours correlates strongly with candidate ghosting, particularly among younger applicant pools who have been conditioned to expect near-instant digital communication. This is not a knock on human recruiters; it is a reflection of the structural mismatch between traditional recruiting workflows and modern candidate expectations in a competitive hourly labor market.

Technology Industry Candidates: The Skeptics in the Room

If logistics and retail represent AI screening's strongest reception, the technology sector represents its most challenging audience. Candidate experience data from US tech recruitment consistently shows that mid-to-senior level technology candidates are more skeptical of AI screening than almost any other demographic group. The reasons are worth understanding carefully, because they are not what you might expect.

The issue is not primarily that tech candidates dislike technology. Many are enthusiastic about AI in other contexts. The issue is that technology candidates, particularly software engineers and product managers, tend to have strong opinions about how AI should be built and deployed, and they apply those opinions critically to the tools used to evaluate them. An AI interview platform that appears to use simplistic natural language processing, asks questions that feel algorithmically generic, or fails to adapt to the depth or nuance of a candidate's response signals to a technically informed candidate that the hiring organization may not be especially thoughtful about how it builds and deploys systems.

This creates an experience problem that is genuinely difficult to solve through process design alone. The AI screening tool itself must be substantively good enough to withstand the evaluation of candidates who know what well-designed AI looks like.

Senior Tech Roles and the Personalization Gap

For senior technology hiring in the US and UK, candidate experience data shows a pronounced preference for human interaction at the screening stage, not necessarily because AI is perceived as unfair, but because senior candidates expect a recruiting conversation to demonstrate that the organization has studied their background, understands their career arc, and has thought carefully about whether the role is genuinely right for them. AI screening at its current state of deployment rarely delivers that level of personalization.

Where technology companies have had better results is in deploying AI screening for early-stage technical assessments, such as coding challenges or analytical problem sets, where the evaluation criteria are objective and candidates understand that automated assessment is standard practice. The experience scores for AI-delivered coding assessments are substantially higher than for AI-delivered behavioral or competency interviews, because the format-function match is better understood by candidates.

Healthcare Hiring and the Trust Question

Healthcare recruiting across the UK and US presents a distinct set of candidate experience challenges. Clinical and allied health candidates applying for roles in NHS-affiliated organizations or large US hospital systems bring a particular sensitivity to the screening process, rooted in professional culture that places high value on human judgment in high-stakes decisions.

For many healthcare candidates, an AI screening process raises questions that go beyond personal preference. If the organization makes consequential patient-facing decisions using rigorous human judgment, why is it using automated screening to decide who gets to demonstrate their fitness for the role? This is a coherent concern, and candidate feedback data from healthcare hiring reflects it. Clinical candidates who encounter AI screening without clear explanation of how it is used in the overall process, and without assurance of human review, report significantly lower confidence in the fairness of the assessment.

Healthcare organizations that have achieved stronger candidate experience scores with AI screening share a common design choice: they are explicit that AI screening is used for initial scheduling and structured information gathering, with all qualification decisions reviewed by a human recruiter. This framing does not eliminate skepticism but it substantially reduces the concern that a machine is making unilateral hiring decisions about clinical professionals. The technology is framed as an administrative tool rather than a judgment system, and that framing matters.

What Candidates Dislike Most About AI Screening

Post-screen survey data across industries and geographies converges on several recurring pain points in AI candidate experience. Understanding these is essential for any recruiting team planning to deploy or improve AI screening workflows.

Generic questions are the most commonly cited frustration. When candidates complete an AI screen and feel that the questions had nothing to do with the specific role they applied for, satisfaction scores drop sharply. This is often a configuration problem rather than a platform limitation. Many AI screening deployments use default question banks that are not customized to the role, level, or function. Candidates can tell. The experience feels like answering a survey written for nobody in particular.

No opportunity for clarification is the second most common complaint. In a human conversation, a candidate can say they are not sure what the interviewer means, or provide context that reframes their answer. In most AI screening formats, the question is presented and the clock starts. Candidates who felt a question was ambiguous and had no mechanism to address that ambiguity before answering report substantially lower perceived fairness scores.

Technical problems have an outsized impact on candidate experience relative to their frequency. A candidate who encounters audio dropout, recording failures, or platform instability during an AI screen is unlikely to complete the process and extremely unlikely to rate the experience positively if they do. Critically, the frustration is often directed at the company, not the platform. The recruiting organization is responsible for the tool it chose and deployed, and candidates rightly hold it accountable for technical failure.

Feeling filtered by software is a subtler but significant concern. Candidates who sense that a scoring algorithm is determining their fate based on vocabulary patterns, speech pace, or facial expressions report lower trust in the process regardless of outcome. This concern is most pronounced among candidates from non-native English-speaking backgrounds and those from underrepresented groups who are already attuned to the ways recruiting processes can disadvantage them.

What Candidates Prefer About AI Screening

The negative dimensions get more attention, but candidate experience data also surfaces genuine preferences for AI screening in specific contexts.

Scheduling flexibility is the most consistently cited advantage. Candidates who could complete an AI screen at a time that suited them, without coordinating availability with a recruiter, report significantly higher satisfaction with the process logistics than candidates who went through traditional phone screen scheduling. For candidates managing shift work, caregiving responsibilities, or multiple simultaneous job searches, the ability to complete a screening step at 10pm on a Tuesday matters.

Reduced interview pressure is reported by a meaningful proportion of candidates, particularly early-career applicants and those who experience social anxiety in live conversations. The asynchronous nature of most AI video screening removes the real-time performance pressure of a live call. Some candidates report feeling they could think more carefully about their answers and present their experience more clearly than they might in a synchronous conversation.

Perceived structural fairness of consistent questions is valued by candidates who have had negative experiences with inconsistent human screening. Knowing that every candidate was asked the same questions, in the same format, with the same time constraints, reads as procedurally fairer to many applicants than a human interview where the conversation might take different directions depending on the recruiter's mood or interest.

Faster communication about process status significantly improves candidate experience scores, particularly at the top of the funnel. AI-triggered acknowledgment messages that confirm receipt of a screening response and provide a realistic timeline for next steps dramatically reduce candidate anxiety compared to processes where candidates complete a screen and then hear nothing for a week.

The Speed-Experience Tradeoff: What Throughput Costs You

One of the underexamined tensions in AI screening is the tradeoff between throughput and relationship quality. AI screening enables recruiting teams to process significantly more candidates in significantly less time. This is the headline efficiency benefit, and it is real. The question worth asking carefully is what happens to candidate experience at the bottom of the funnel when the top of the funnel is very wide.

When AI screening dramatically increases the volume of candidates who advance to human review stages, those human review stages face new pressure. Recruiters who were previously spending 40 minutes on a careful phone screen now have four times the volume to assess. If resourcing does not scale with throughput, the human interaction that candidates do experience becomes rushed and lower quality, creating a bottleneck that transfers the experience problem from the AI stage to the human stage.
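The arithmetic behind this bottleneck is simple enough to sketch. Assuming total recruiter time stays fixed unless capacity is explicitly added, the time available per candidate shrinks in proportion to the volume increase. The function and parameter names below are illustrative.

```python
def minutes_per_candidate(baseline_minutes, volume_multiplier, capacity_multiplier=1.0):
    """Recruiter minutes available per candidate after a volume change.

    baseline_minutes: time spent per candidate before the change.
    volume_multiplier: how many times more candidates reach human review.
    capacity_multiplier: how many times more recruiter time is available
        (1.0 means resourcing did not scale at all).
    """
    if volume_multiplier <= 0 or capacity_multiplier <= 0:
        raise ValueError("multipliers must be positive")
    return baseline_minutes * capacity_multiplier / volume_multiplier


# The scenario above: a 40-minute screen, four times the volume, same staff.
print(minutes_per_candidate(40, 4))       # 10.0
# Doubling recruiter capacity recovers only half of the lost time.
print(minutes_per_candidate(40, 4, 2.0))  # 20.0
```

Under these assumptions a careful 40-minute conversation becomes a 10-minute one, which is the rushed, lower-quality interaction the paragraph above describes.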

Teams that manage this transition well tend to redesign their full-funnel workflow before deploying AI screening, not after. They think about where human attention delivers the most candidate experience value, concentrate recruiter time there, and use AI screening as the mechanism that makes concentrated human attention possible, not the mechanism that replaces it entirely.

The recruiting teams with the strongest candidate experience scores are not those who deployed AI screening and removed human touchpoints. They are the teams who deployed AI screening and redeployed human attention to the moments where it matters most.

How High-Performing Recruiting Teams Improve AI Candidate Experience

Across industries, the recruiting teams that achieve consistently strong candidate NPS in AI screening workflows share a set of operational practices that are worth examining closely. None of them are especially complex. All of them require deliberate investment in process design rather than simply deploying a platform and expecting experience quality to follow.

Transparency and Communication Design

The single most impactful improvement in AI candidate experience is upfront transparency. Candidates who receive clear, honest communication before their AI screening session about what format to expect, how their responses will be used, and what role AI plays in the overall decision process report higher satisfaction across nearly every subsequent dimension of the experience, including dimensions that have nothing to do with the information provided.

This is partly a trust effect: when an organization is transparent about its process, candidates extend more benefit of the doubt when something goes wrong. It is also partly an expectation-setting effect: candidates who know what to expect from an AI screen are less likely to be jarred by the format, less likely to experience the absence of live conversation as a red flag, and more likely to approach their responses with appropriate preparation.

Practically, this means the candidate-facing language around AI screening should be written by someone who thinks carefully about candidate psychology, not generated from a platform template. The difference between feeling like you are walking into a process you understand and feeling like you have been dropped into a black box is often just a few paragraphs of well-written communication at the start of the sequence.

Human Escalation Pathways

A practice that distinguishes high-performing AI screening deployments from average ones is the presence of a clear human escalation pathway for candidates who encounter problems, who have access needs, or who simply feel the AI format is not giving them a fair opportunity to represent their qualifications.

This does not have to be a high-cost intervention. It can be as simple as a clearly communicated email address monitored by a recruiter, or a form submission that triggers a human review of the candidate's application. What matters is that candidates know the pathway exists before they begin the AI screen. The presence of the option, even when rarely used, significantly increases perceived fairness and organizational care.

Hybrid AI Plus Recruiter Workflows

The most durable candidate experience improvements come from workflow designs that treat AI and human interaction as complementary rather than substitutional. In practice, this typically means using AI screening to handle structured competency assessment, scheduling, and initial information gathering, while using recruiter interaction to handle role-specific conversation, candidate questions, and relationship-building at the point in the process where candidates are being seriously considered.

UK graduate hiring programs at large professional services organizations have developed some of the more thoughtful hybrid models in this area. AI screening handles first-stage competency assessment at high volume in September and October. Candidates who advance receive a short human follow-up call, sometimes just ten to fifteen minutes, that serves less as an assessment and more as an opportunity for the candidate to ask questions and hear a human voice representing the organization before committing to a more intensive assessment center process. Candidate NPS in these hybrid models consistently outperforms both fully automated and fully human screening workflows at equivalent volume levels.

Industry-Specific Recommendations

For retail and BPO hiring, the priority should be mobile optimization, speed of response, and multilingual capability. Candidates in these markets often complete applications on smartphones with variable connectivity. AI screening platforms that are not mobile-native create friction that shows up clearly in dropout and experience data. Investing in platform selection criteria that prioritize mobile performance and language flexibility pays dividends in candidate satisfaction and completion rates.

For logistics and warehousing, time-to-decision is the dominant variable. Every additional day between application and first contact is a day during which a candidate may accept an offer elsewhere. AI screening configured to produce same-day or next-day qualification decisions, with immediate automated communication of next steps, directly addresses the most common candidate frustration in this sector. The content quality of the screen matters less than its speed and its communication design.

For technology hiring, the recommendation is selective deployment. AI screening works well at the technical assessment stage for junior roles where standardized evaluation is appropriate and expected. For mid-to-senior roles, the evidence suggests that leading with AI screening before any human contact is a candidate experience risk, particularly when the talent market for the role is competitive. A brief human intake call before AI technical assessment, or an AI-assisted but recruiter-reviewed competency assessment, tends to perform better in candidate NPS than fully automated early-stage screening for technical professionals.

For healthcare hiring, transparency and human review assurance are non-negotiable for clinical roles. AI screening can be deployed effectively for administrative and support role categories where the professional culture norms around AI in decision-making are less sensitive. For any clinical role, the organization should be able to state clearly that AI is used for structured information gathering and scheduling only, and that all qualification decisions are made by humans. That statement, when credible, significantly improves candidate trust.

Remote Hiring Trends and What They Mean for AI Screening

The growth of distributed and remote hiring has materially changed the baseline expectation candidates bring to AI screening. In markets where fully remote application processes are standard, candidates are more accustomed to asynchronous digital interaction and less likely to experience AI screening as an unusual or alienating choice. This is particularly true in US technology hiring and UK professional services roles where remote work norms were deeply embedded during and after the pandemic period.

Conversely, in sectors and geographies where in-person hiring has remained dominant, such as parts of the Indian retail market or US healthcare systems with strong on-site working cultures, AI screening can feel more dissonant because it sits against a broader context of expected face-to-face interaction. Understanding your candidate market's baseline expectations around digital versus in-person process is essential context for predicting how AI screening will land in a given deployment.

Remote hiring trends have also accelerated the development of AI screening technology itself. Platforms developed to serve distributed hiring pipelines tend to have better asynchronous interview design, more robust connectivity fallback systems, and more flexible scheduling architecture than platforms originally built for supplementing in-person processes. For teams evaluating AI screening software, the platform's remote-native heritage is often a useful proxy for overall design quality.

How Candidate Experience Shapes Employer Brand

One dimension of AI screening candidate experience that does not receive enough operational attention is its effect on employer brand perception, particularly in tight talent markets. Candidates talk. Online review platforms where candidates share hiring experiences have made this more visible, but the underlying dynamic predates review sites. A candidate who has a notably positive or notably negative experience in an AI screen will tell people they know in their professional network.

For organizations hiring in specialized talent communities, the candidate experience in AI screening is not just a metric; it is a signal about organizational culture and values that circulates in communities the employer may not have direct visibility into. Technology talent communities in particular are adept at sharing information about which employers' processes are respectful and which are not, and that information influences application decisions long before an individual reaches the screening stage.

This is one reason why investing in AI screening process design is not simply a candidate satisfaction project. It is a talent pipeline project. The candidates who hear from peers that your AI screening process is well-designed, fair, and communicative are more likely to apply in the future. The candidates who hear that your AI screen is confusing, generic, or opaque may remove you from consideration before the process begins.

The Future of AI Candidate Experience

The trajectory of AI screening technology suggests that several of the most persistent candidate experience problems are technically solvable, though solving them requires investment and intentionality from both platform providers and recruiting teams deploying the tools.

Adaptive questioning, where AI screening dynamically adjusts follow-up questions based on candidate responses, addresses the generic question complaint that shows up most consistently in negative feedback. Platforms that have implemented adaptive questioning in controlled settings report higher candidate satisfaction with question relevance, which is one of the strongest predictors of overall screening experience. The technical challenge is ensuring that adaptation improves the experience consistently across candidate profiles rather than introducing new forms of differential treatment.

Improved multilingual and multimodal assessment capability will progressively address the experience gaps that currently disadvantage candidates from non-English-dominant backgrounds or those whose communication style does not fit the implicit norms of current scoring models. This is genuinely important work both for candidate experience and for the validity of assessments that claim to measure competence but may be measuring linguistic conformity.

The relationship between AI screening and human recruiter interaction will likely evolve toward tighter integration rather than cleaner separation. The most promising emerging models treat AI not as the first gate before human contact, but as the structured backbone of an assessment process where human judgment and relationship-building are woven in at specific, deliberately designed moments. This requires recruiting organizations to invest in workflow design capability alongside technology capability, which is a more demanding ask but also a more durable source of competitive advantage in candidate experience.

What the Benchmark Actually Tells Us

The candidate experience benchmark across AI and human screening ultimately tells a story about process quality, not technology preference. The strongest AI screening deployments deliver candidate experience that is comparable to, and in some operational contexts better than, human screening delivered at scale. The weakest AI screening deployments deliver experiences that are worse than almost any alternative. The same distribution exists for human screening. Good process design, executed with attention to candidate psychology and operational reality, produces good experiences regardless of the medium.

What is different about AI screening is the nature of its failure modes. When a human recruiter delivers a poor experience, it typically affects one candidate at a time. When an AI screening workflow is poorly designed, it delivers the same poor experience to every candidate who enters it, consistently and at scale. This is both AI screening's strength and its risk: standardization amplifies quality in both directions.

For recruiting leaders, the practical implication is clear. The decision to deploy AI screening should be followed immediately by a commitment to invest in the process design, communication architecture, and human workflow integration that determine whether that screening delivers a consistently good experience or a consistently poor one. The technology choice is secondary. The design investment is primary. And the candidates, as the benchmark data consistently shows, are quite good at telling the difference.

Design the Hiring Experience Your Candidates Actually Deserve

The best AI hiring experiences are not fully automated. They are transparent, thoughtfully designed, and supported by the right human touchpoints at the right moments.
