AI transcription accuracy in interviews: what the error rates mean for your hiring decisions

.jpeg)

March 15, 2026

AI Transcription Accuracy in Interviews: What the Error Rates Mean for Your Hiring Decisions

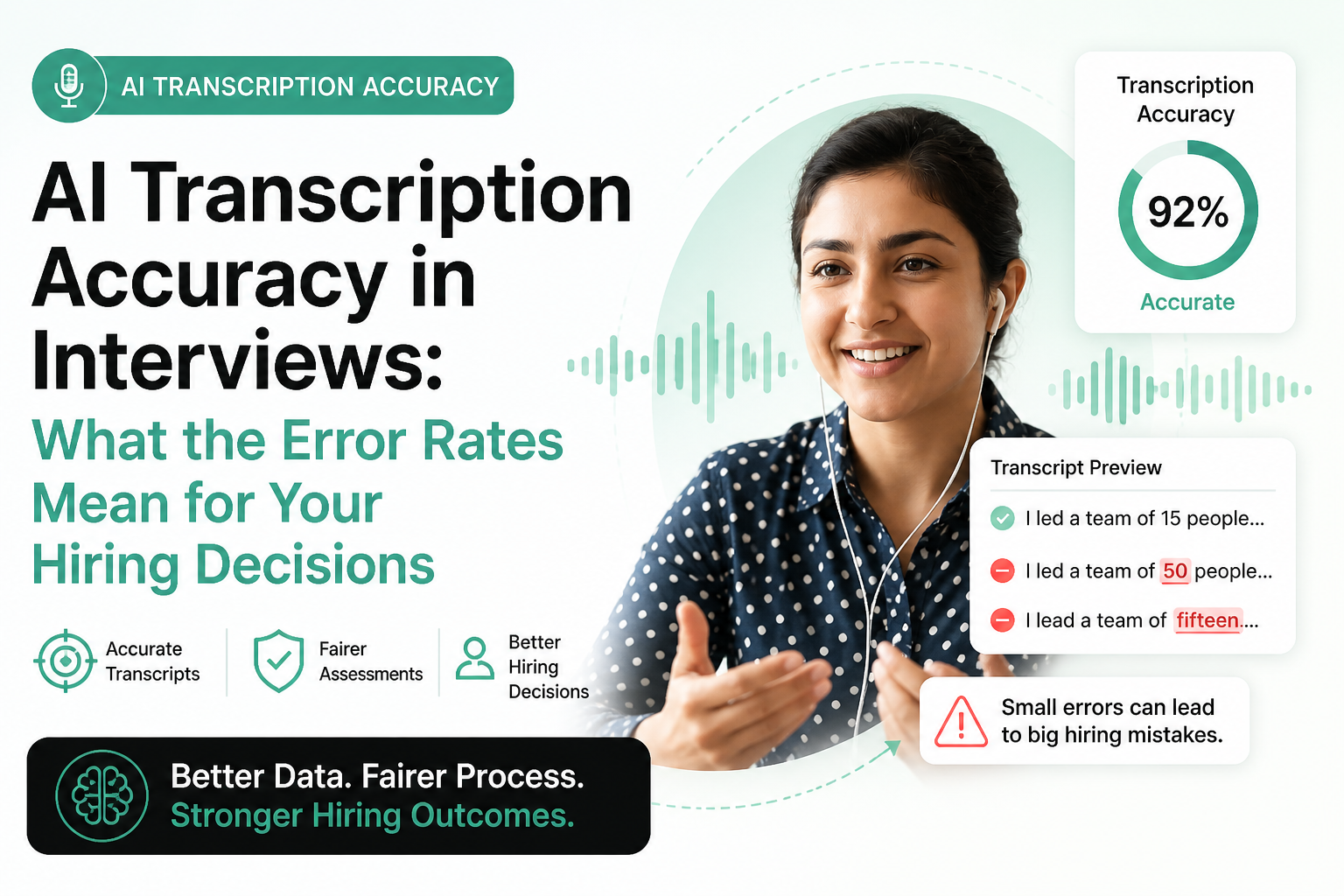

Every time an AI system transcribes a candidate interview, it makes judgment calls. It decides whether someone said "led" or "read," whether a sentence ended or continued, whether a technical term was spoken correctly or mangled in transit. Most of those calls are right. Some are wrong. And the ones that are wrong don't announce themselves — they sit quietly in the transcript, shaping how a recruiter reads a candidate's answers, how a hiring manager scores communication quality, and ultimately whether someone gets the job.

This isn't a hypothetical risk. AI transcription accuracy in hiring is a measurable variable with documented error rates, known failure patterns, and real consequences for the people on the receiving end of those errors. Understanding what those error rates actually mean — not just as a percentage on a vendor benchmarking sheet, but as a force acting on your hiring decisions — is the difference between using AI transcription intelligently and assuming it's doing something it isn't.

What transcription accuracy actually means in a hiring context

Transcription accuracy is typically measured as Word Error Rate, or WER. The formula is straightforward: the number of substitutions, deletions, and insertions required to convert the AI's output into the correct transcript, divided by the total number of words in the correct transcript, expressed as a percentage. A WER of 5% means roughly 1 in 20 words was transcribed incorrectly. A WER of 15% means nearly 1 in 7 words contains an error.

In isolation, those numbers sound manageable. In practice, they aren't uniformly distributed across speakers, accents, audio conditions, or vocabulary types. A system with an overall WER of 8% might produce near-perfect transcripts for a native American English speaker in a quiet room with a high-quality microphone, while generating a WER above 25% for a candidate with a strong regional accent, a mediocre headset, or a tendency to speak quickly under interview pressure. The average obscures the variance, and the variance is where the harm concentrates.

In a hiring context, transcription errors don't just produce cosmetic inaccuracies. They alter the meaning of answers. "I managed a team that grew revenue" becomes "I damaged a team that grew revenue." "I worked with REST APIs" becomes "I worked with wrestled APIs." These aren't hypothetical substitutions — they're the class of error that speech recognition systems make routinely when acoustic conditions or vocabulary fall outside the training distribution.

Current accuracy benchmarks across major AI transcription systems

| System / Context | WER (Clean Audio) | WER (Accented Speech) | WER (Technical Vocab) | WER (Noisy Conditions) |

|---|---|---|---|---|

| Leading general ASR (e.g., Whisper Large) | 3–5% | 8–20% | 7–15% | 12–30% |

| Enterprise interview platforms (avg.) | 5–8% | 12–25% | 10–18% | 15–35% |

| Human professional transcription | 1–3% | 3–8% | 3–7% | 5–12% |

| Domain-adapted ASR (HR-specific) | 3–6% | 9–18% | 5–12% | 10–25% |

These ranges reflect published research, third-party benchmarks, and internal validation data from enterprise deployments. They're not marketing numbers — and they're considerably worse than most vendors emphasize in sales materials. The clean-audio figures are often the ones cited. The accented speech and noisy condition figures are the ones that reflect actual hiring environments.

Where errors cluster and why it matters for evaluation

Not all transcription errors carry equal weight in a hiring assessment. A misheard filler word is noise. A misheard technical term during a skills-assessment question is a different matter entirely.

Proper nouns and technical terminology

Speech recognition models are trained on large corpora of general language. They handle common words well. They handle rare words, product names, programming languages, technical frameworks, and industry jargon poorly — particularly when spoken by someone with an accent that shifts phoneme distribution away from the training data. A candidate who says "Kubernetes" clearly may see it transcribed as "Cuba net is." A candidate discussing "LLM fine-tuning" may produce a transcript that reads as something phonetically adjacent but semantically meaningless.

The implication: any role where technical vocabulary is a primary signal for candidate evaluation carries elevated transcription risk. Engineering, data science, finance, legal, and medical hiring are all high-risk categories where word-level accuracy on technical terms is precisely the dimension that matters most.

Fast speech and speech under pressure

Candidates in interviews are often nervous. Nervous candidates speak faster. Faster speech degrades transcription accuracy, particularly at word boundaries where phonemes from adjacent words blend. The irony is that the interviews where candidates are most stressed — high-stakes roles, late-stage assessments, rapid-fire competency questioning — are also the interviews where transcription accuracy is most likely to degrade.

Disfluencies and non-standard syntax

Real speech is full of restarts, self-corrections, hedges, and non-standard constructions. AI transcription systems handle these inconsistently. Some systems aggressively clean up disfluencies in ways that alter the actual content of what was said. A candidate who says "I, uh, I think the most important thing — actually, let me rephrase — the core issue was process, not people" might end up with a transcript that omits the self-correction entirely. The analytical precision that self-correction demonstrated has been silently removed.

The bias problem hiding inside accuracy statistics

The most widely documented finding in AI transcription research is that error rates are not uniformly distributed across demographic groups. Multiple peer-reviewed studies have found that commercial speech recognition systems produce significantly higher error rates for speakers who are Black, have non-American English accents, speak with regional dialects, or are older. Research published across multiple academic institutions has found WER disparities of 15–40 percentage points between groups on identical content.

| Speaker Characteristic | Documented WER Impact | Hiring Risk |

|---|---|---|

| Non-native English accent | +8–20 pts above baseline | High — affects comprehension scoring |

| African American Vernacular English | +15–35 pts above baseline | Very high — documented in multiple studies |

| Regional US dialects (Southern, Appalachian) | +5–15 pts above baseline | Moderate to high |

| Older speakers (65+) | +8–18 pts above baseline | High — particularly for video interview AI |

| Standard American English, quiet conditions | Baseline (3–8%) | Low |

What this means for hiring: if your AI interview platform uses transcription as an input to scoring — whether for communication quality ratings, keyword matching, or automated competency assessment — candidates from certain demographic groups will systematically receive worse transcripts, which will systematically produce lower scores, which will systematically reduce their advancement rates. This is not a theoretical risk. It is a measurable bias channel embedded in the infrastructure of your hiring process.

The compounding factor is invisibility. Unlike an interviewer who might respond differently to a candidate's accent, a transcription-based scoring system appears objective. The score comes from an algorithm. The transcript looks like a neutral record. The bias is structural and invisible to anyone who doesn't know to look for it — which makes it harder to detect, harder to challenge, and harder to defend against in compliance or legal contexts.

How transcription errors propagate into hiring decisions

The error doesn't stop at the transcript. In modern AI-assisted hiring platforms, transcripts feed downstream processes: keyword extraction, sentiment analysis, communication quality scoring, competency tagging, and automated summaries that hiring managers review instead of watching full interviews. Each downstream process inherits and potentially amplifies the error.

Keyword and competency matching

Many AI interview platforms score candidates partly on whether their answers contain specific keywords or phrases associated with competencies. A candidate who clearly demonstrated strategic thinking but whose transcript contains three substitution errors in the relevant sentence may not trigger the keyword match. They get a lower competency score not because they lack the competency but because the transcription system couldn't parse their answer accurately.

Communication quality scoring

Some platforms use transcript coherence, sentence structure, and vocabulary metrics as proxies for communication quality. A transcript with a 20% WER will score worse on these metrics than the actual spoken answer warrants. A candidate who speaks clearly and confidently but whose accent produces elevated transcription errors will appear in the data as a weaker communicator — regardless of actual communication quality.

Automated summaries

When a hiring manager reviews an AI-generated summary of an interview rather than the full recording, they're reading a document that has already been through two transformation steps: transcription (with error rate) and summarization (with its own fidelity issues). If the transcript misrepresents what a candidate said about a key qualification, the summary will too — and the hiring manager will make a decision based on a record that doesn't accurately represent what happened in the interview.

Real hiring scenarios where transcription errors changed outcomes

The technical screen that screened out the expert

A senior data engineer with twelve years of experience applied for a principal role at a mid-size fintech company. The company used an AI video interview platform that scored candidates on technical keyword density and communication clarity. The engineer's answers were technically precise and well-structured, but she spoke with a strong Indian accent and used several technical terms — "Apache Kafka," "Spark streaming," "schema registry" — that the transcription system rendered inconsistently. Her keyword match score came in below threshold. She was auto-rejected at the AI screening stage. A recruiter who later reviewed the video recording as part of a process audit flagged her as a top candidate. She had been eliminated by a transcription failure, not a competency gap.

The communication quality score that penalized fluency in a second language

A sales candidate who was a fluent speaker of English as a second language completed a video interview for a regional account executive role. His spoken answers were clear, structured, and persuasive. The transcription system produced a WER of approximately 18% due to his accent, generating lower scores on the platform's "verbal clarity" and "articulation" metrics. He advanced only after a recruiter who happened to watch the video flagged the discrepancy between the video quality and the AI assessment. Without that intervention, the automated score would have ended his candidacy.

The self-correction that became a contradiction

A product manager candidate was asked about a product failure they had navigated. Her answer included a careful self-correction: she started to describe it as a team failure, then reframed it as a process failure with clearer attribution. The transcript cleaned up the disfluency in a way that made her answer appear contradictory. The hiring manager's summary flagged "inconsistent self-awareness" as a concern. The concern didn't exist in the actual interview.

What good transcription accuracy looks like in practice

Vendor benchmarks and academic WER figures are useful reference points, but they don't tell you what accuracy level is actually acceptable for your specific use case. The right threshold depends on how transcription is being used and how much weight it carries in the decision.

| Use Case | Acceptable WER | Required WER | Why |

|---|---|---|---|

| Human reference / review aid | Up to 15% | Below 10% | Reviewer can cross-check against video |

| Keyword / competency scoring | Below 8% | Below 5% | Errors directly affect score |

| Communication quality scoring | Below 5% | Below 3% | Metric is extremely sensitive to WER |

| Auto-summary for HM review | Below 8% | Below 5% | No human review of source transcript |

| Compliance / legal record-keeping | Below 3% | Professional human review | Accuracy required for legal defensibility |

The practical implication: most AI interview platforms are not operating at the accuracy levels required for the scoring functions they're performing. A platform with a general WER of 7–10% using transcription as a direct input to communication quality scores is applying a noisy measurement to a sensitive evaluation. The scores will appear precise — a decimal point on a scale — while being built on a foundation with meaningful error rates.

The compliance and legal exposure angle

AI hiring tools are increasingly under regulatory scrutiny. In the United States, the EEOC has issued guidance on AI hiring tools and adverse impact. New York City's Local Law 144 requires bias audits for automated employment decision tools. Illinois' AI Video Interview Act mandates transparency about how AI analyzes candidates in video interviews. The European Union AI Act classifies AI systems used in employment decisions as high-risk, with corresponding requirements for accuracy, transparency, and human oversight.

Transcription accuracy sits at the center of several compliance dimensions. The specific legal risks include:

- Disparate impact claims: If candidates from protected classes advance at lower rates through AI-screened interviews, and transcription accuracy disparities contribute to that difference, the selection method may fail the 4/5ths rule or similar adverse impact tests.

- Transparency violations: Several jurisdictions require employers to disclose when AI is used in hiring decisions. If the role of transcription in scoring is not disclosed, that disclosure may be incomplete.

- Accuracy representation: Vendor contracts that represent AI accuracy at benchmark levels may not hold up against real-world performance data from your specific candidate population.

- Record accuracy requirements: In jurisdictions where interview records may be required for compliance purposes, transcripts with material error rates may not constitute accurate records of what was said.

How to audit your AI interview platform's transcription accuracy

Most hiring teams have never conducted a transcription accuracy audit. Most don't know their platform's actual WER for their candidate population. The methodology is straightforward even if the execution requires some effort.

Step 1: Build a ground truth sample

Select 50–100 completed interviews from your recent pipeline, distributed across role types, seniority levels, and candidate backgrounds. Have a human transcriber produce accurate transcripts for each interview. These become your ground truth.

Step 2: Calculate actual WER against ground truth

Compare the AI-generated transcripts against your human transcripts using WER calculation. The open-source tool jiwer in Python automates this. Record WER per interview, not just overall average.

Step 3: Segment by relevant variables

Look at WER distribution across role types, interview conditions (remote vs. in-person, device type), and any demographic proxies available to you. You're looking for whether error rates cluster in ways that correlate with protected characteristics.

Step 4: Map errors to scoring impact

Take a subset of high-WER transcripts and manually review which errors affected keyword matches, competency scores, or communication quality ratings. Quantify the score impact. This tells you not just how inaccurate the transcription is, but how much that inaccuracy is moving outcomes.

Step 5: Review vendor SLAs and accuracy guarantees

Compare your actual WER data against whatever accuracy claims your vendor has made. If there's a material gap — particularly for specific candidate populations — that gap is both a contract issue and a process risk that needs to be addressed before your next hiring cycle.

Process design choices that reduce transcription risk

Use transcripts as reference, not source of truth

The highest-risk use of AI transcription is when the transcript — rather than the actual interview recording — becomes the primary record that evaluators act on. Requiring evaluators to watch or listen to at least the relevant segments of interviews before making final decisions eliminates the path by which transcription errors become outcome-determinative.

Flag high-WER transcripts for human review

Most AI transcription systems can produce a confidence score alongside the transcript. Low confidence scores correlate with higher WER. Building a workflow that flags low-confidence transcripts for human review before they feed into scoring catches the highest-risk cases without requiring human review of every transcript.

Separate transcription-based and human-evaluation metrics

If your platform produces composite scores that blend AI transcription-derived metrics with human assessor ratings, make those components visible separately. A candidate who scores low on automated communication quality but high on human assessor ratings is a signal that the transcription layer may be depressing the automated score.

Validate scoring thresholds on diverse candidate samples

If you use pass/fail thresholds on AI scores for automated progression, validate those thresholds against a sample that reflects the demographic diversity of your actual candidate pool. A threshold calibrated on a predominantly native English-speaking validation sample may be systematically mis-calibrated for a more diverse pool.

Audit for accent and dialect bias specifically

Given the documented disparities in transcription accuracy by accent and dialect, a specific audit for this dimension is warranted. If your candidate pool includes significant proportions of non-native English speakers or speakers with regional dialects, you're operating in the highest-risk zone for transcription-based bias.

What to ask your AI interview platform vendor

- What is your system's WER on standard benchmarks, and which benchmarks specifically?

- What is your documented WER for non-native English speakers and speakers with regional accents?

- Have you conducted disparate impact testing on transcription accuracy across demographic groups? Can you share the results?

- Which downstream scoring functions use transcription as a direct input?

- What confidence score thresholds trigger human review in your system?

- Has your system been independently audited for accuracy, and by whom?

- What accuracy guarantees are in your SLA, and what remedies apply if accuracy falls below threshold in production?

- Does your system use domain-adapted models for specialized vocabularies (technical, medical, legal), and how were those models validated?

A vendor who can answer these questions with specific data is operating with appropriate transparency. A vendor who deflects to marketing benchmarks or claims universal high accuracy without segmented data is giving you a clear signal about what their system actually performs like in practice.

Accuracy, fairness, and the business case for getting this right

There's a tendency to frame transcription accuracy in hiring as a compliance risk to be managed — a legal exposure to be mitigated. That framing is accurate but incomplete. The fuller picture is that transcription errors in AI interviews are also a talent quality problem. Every time a highly qualified candidate is eliminated by a transcription failure, you lose a potential hire. Every time a less qualified candidate advances because their speech patterns produce cleaner transcripts, your hiring bar erodes.

The candidates most likely to be disadvantaged by transcription errors — non-native English speakers, speakers with regional accents, candidates from diverse linguistic backgrounds — are often the candidates who bring exactly the range of perspective and experience that high-performing organizations benefit from. Transcription-based bias doesn't just expose you to legal risk. It actively narrows your talent pool in ways that compound over hiring cycles.

Getting transcription accuracy right — auditing your actual error rates, understanding where errors concentrate, building processes that don't let errors unilaterally determine outcomes — is both the ethical path and the commercially sensible one.

Frequently asked questions

For transcripts used only as a human reference aid, a WER below 10% is generally acceptable. For transcripts that directly feed into automated scoring — keyword matching, communication quality metrics, competency tagging — you need WER below 5%, and ideally below 3%. For any use in compliance records or legal proceedings, professional human transcription remains the appropriate standard. Most commercial AI interview platforms operate in the 5–12% WER range on realistic candidate audio, which means they're acceptable for reference but risky for direct scoring without human validation.

Yes, significantly. Research consistently shows that commercial ASR systems produce higher error rates for non-native English accents, African American Vernacular English, and several regional dialects. The disparity ranges from roughly 8 percentage points above baseline for moderate accents to 30+ percentage points for strong accents in noisy conditions. In hiring, this matters because if transcription accuracy is lower for certain speaker groups and transcription feeds into scoring, those groups will systematically receive lower scores — creating a bias channel in the hiring process that appears objective but is structurally unfair.

Requirements vary by jurisdiction. Illinois' AI Video Interview Act requires employers to disclose AI analysis of video interviews and obtain consent. New York City's Local Law 144 requires bias audits for automated employment decision tools. The EU AI Act classifies employment AI as high-risk with transparency requirements. Even where specific transcription disclosure isn't mandated, general AI-in-hiring disclosure requirements in multiple jurisdictions likely encompass transcription-based scoring systems. Apply a disclosure-first standard as a baseline and consult employment counsel for your specific jurisdictions.

The most reliable method is to compare the candidate's AI-generated transcript against the actual interview recording for the answers that contributed most to their score. Look for substitution errors on technical terms, dropped or altered content in key competency answers, and any flagged concerns in the evaluation that seem inconsistent with the video. If your platform provides confidence scores per segment, low-confidence segments in evaluation-relevant answers are the highest-priority areas to review. This process is time-intensive for large volumes but practical for any candidate decision you want to validate or defend.

First, pull the original interview recording and compare it against the transcript for the specific answers that contributed to the score. If you find material errors — particularly in technical vocabulary, competency-relevant answers, or anything that contributed to a scored concern — document what you find and escalate to your recruiting leadership. The candidate's application should be re-reviewed based on the actual interview content, not the errored transcript. Long-term, incidents like this are exactly the kind of case data that should drive a formal transcription accuracy audit of your platform.

Yes. Domain adaptation — fine-tuning a base transcription model on audio samples specific to your industry vocabulary and, where ethically collected, diverse speaker populations — can reduce WER substantially for high-error cases. Some enterprise platforms offer domain-adapted models for technical roles. For accent and dialect improvement, the requirement is diverse training data, which some vendors are actively working to expand. If you're running high-volume hiring with a diverse candidate population, ask your vendor specifically whether domain-adapted models are available and what accuracy improvement they demonstrate for your target speaker populations.

The impact depends on which scoring dimensions use transcription as an input. Keyword and competency matching is directly affected — if relevant terms are transcribed incorrectly, the match fails regardless of whether the candidate actually said the right thing. Communication quality scores derived from transcript coherence and vocabulary metrics are sensitive to WER because errors degrade apparent coherence. Automated summaries inherit transcript errors and can introduce additional distortion in summarization. Sentiment analysis applied to transcripts is affected because sentiment signals often reside in specific word choices that errors may alter.

Build AI-powered hiring that screens candidates fairly — accurate, auditable, and bias-aware.

Try for free.png)

.jpg)

.png)